Challenge

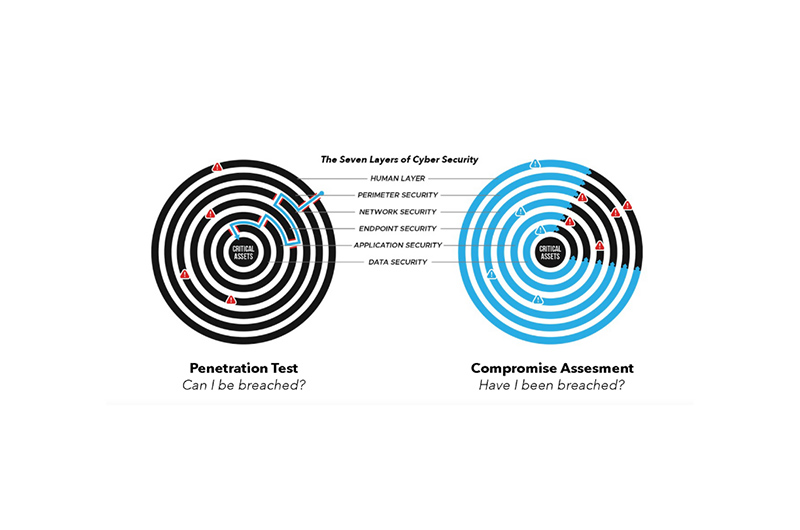

The organisation was a MAS-regulated financial institution. That designation carries specific obligations under the MAS Technology Risk Management guidelines: defence-in-depth across system layers, formal governance of changes that affect security controls and, if something goes wrong, the obligation to report incidents and demonstrate that the architecture met the required standard. These are the framework against which the organisation’s decisions, including the one that enabled this incident, would eventually be assessed.

The firm’s internal systems handled sensitive customer data as a matter of routine. The APIs serving this data were designed for system-to-system communication and had never been built with external authentication in mind. They did not need to be — the protection sat at the network perimeter, in the form of access controls that restricted the endpoints to known internal sources and kept them invisible to the internet.

In late 2025, those controls were removed. The change was deliberate, made for operational reasons, without a full assessment of what it left exposed. No compensating control was introduced elsewhere in the architecture. The internal endpoints, suddenly reachable from the public internet, had nothing to check whether someone calling for access had any right to be there.

This is precisely the architecture that MAS Technology Risk Management guidelines are designed to prevent. Defence-in-depth — the principle that security should not collapse entirely when a single control fails or changes — is an explicit requirement for regulated financial institutions. A system whose only protection is a perimeter rule that an operational change can silently remove is a system with a single point of failure. Under MAS expectations, that is not a configuration gap. It is a governance failure.

The exposure sat undetected for weeks. What eventually triggered the alert was not a human catching something suspicious — it was sheer volume. Automated scripts had been sending tens of thousands of requests to internal data endpoints, and the logs eventually crossed a threshold that could not be ignored. By then, the damage was done.

Solution

1. Evidence acquisition and scope definition

Blackpanda’s first task was to establish what the logs could prove. Evidence across three independent telemetry sources provided overlapping coverage of the incident window. The team correlated these sources by timeline, endpoint path, and behavioural pattern rather than by a persistent request identifier, which the architecture did not preserve end-to-end. This approach enabled high-confidence reconstruction of the activity sequence without one-to-one request lineage across all layers. A complete inventory of collected evidence was compiled and retained for regulatory purposes.

2. Incident window and request analysis

Log analysis identified tens of thousands of HTTP requests originating from a small number of external IP addresses during the review period — of which approximately 99% targeted the internal API namespace. Within the defined incident window of roughly seventeen hours, the vast majority of those requests returned successful responses, confirming that the backend application processed them and returned data. Two IP addresses accounted for over 99% of activity; the remainder contributed isolated, low-volume probing.

3. Endpoint-level behavioural reconstruction

A small subset of endpoints accounted for over 99% of confirmed data returned. Analysis of request patterns and response characteristics established a two-phase behavioural model. In the first phase, the scripts called a user listing endpoint repeatedly, using pagination parameters to retrieve batches of records — repeated calls, each returning substantial volumes of structured user data. In the second phase, individual user identifiers obtained through the listing were used to query a profile endpoint directly, generating tens of thousands of successful calls across a broad population of distinct user accounts. Concurrently, additional endpoints serving PII and business data were queried across comparable account populations.

4. Data category assessment

Data categories were assessed through confirmed endpoint invocation, response characteristics, and system functionality documentation provided by the organisation. The information potentially accessed fell across two classifications: PII data and business data.

5. Threat actor assessment and attribution

Behavioural analysis confirmed that the activity was automation-driven. Approximately 67% of requests used explicit scripting library user-agent strings; a further 33% used an identical, static browser-style header reused at scale — inconsistent with organic browsing. No indicators of victim-specific reconnaissance, bespoke tooling, persistence mechanisms, privilege escalation, or lateral movement were identified. The source infrastructure — residential ISP address space, a commercial VPN node, and public cloud ranges — was broadly available. The activity was assessed as opportunistic exploitation of an exposed interface rather than a targeted intrusion.

6. Root cause analysis and containment validation

The architecture had no defence-in-depth: perimeter restriction was the trust boundary and, once it was gone, nothing remained to enforce it. The investigation established that the exposure reflected not a single operational misstep but a systemic failure — an architecture built for convenience rather than security, in which no redundancy existed to catch a configuration change before it became a breach. Post-containment telemetry confirmed that following the organisation’s remediation measures, external requests to the affected namespaces were denied at the perimeter layer and no further successful responses were observed.

Results

1. Scope of the data exposure established

The investigation confirmed that tens of thousands of user accounts were referenced during the incident window, with records potentially accessed across PII and business data categories. The activity was highly concentrated rather than broadly distributed across the exposed namespace, establishing a clear and bounded exposure footprint.

2. Containment validated as complete

Post-remediation telemetry confirmed that the specific exposure condition had been resolved at the ingress layer. Following containment, external requests to the affected internal namespaces returned denial responses, and no further successful data retrieval was observed. The forensic review validated the organisation’s own response measures as effective at closing the immediate exposure window.

3. Root cause established as a systemic failure

The investigation determined that the trigger — removal of access controls at the network perimeter layer — was the proximate cause, but not the full picture. The deeper finding was architectural: a system whose security depended entirely on a single perimeter control, with no application-layer authentication, no redundancy, and no governance process to assess the downstream impact of a configuration change before it was made. The organisation had not built security into its architecture; it had built around it. That distinction, established clearly in the forensic record, is material to any regulatory submission — and to any credible remediation programme.

4. MAS regulatory engagement supported

The forensic findings gave the organisation a technically grounded and defensible account of the incident for submission to the Monetary Authority of Singapore. MAS requires regulated financial institutions to report material data breaches, submit a root cause analysis within 14 days of discovery, and demonstrate the nature of the failure, its scope, and the adequacy of the response. Blackpanda’s investigation established all three: the root cause was a specific, identifiable control change compounded by systemic architectural weakness; the scope was bounded and quantified through log analysis; and the containment measures were validated as effective. The threat actor characterisation — opportunistic, automated, non-targeted — was supported by behavioural evidence rather than assertion, which materially strengthens a regulatory submission.

5. Prioritised recommendations delivered

Blackpanda provided a structured set of recommendations across three implementation horizons: immediate perimeter and namespace controls, structural hardening at the application and data layers, and governance practices for ongoing exposure management. These included enforcing deny-by-default ingress policies, segregating internal API namespaces from public routing paths, implementing application-layer authentication independent of network-level controls, and introducing field-level data minimisation and response masking. Together, they addressed both the specific failure mode that enabled this incident and the broader architectural conditions that made it consequential.

The case reinforces a principle that recurs across API security incidents: defence that collapses entirely when a single configuration changes is not defence — it is a single point of failure with a tidy label. Organisations whose internal APIs serve sensitive data need authentication and access control at the application layer regardless of what the network perimeter is doing, because network perimeters change, and they do not always announce it.

Frequently Asked Questions

1. What is an internal API, and why would it be exposed to the internet?

An API — Application Programming Interface — is a defined channel through which software systems exchange data. Internal APIs are built for communication between services within the same application or infrastructure; they handle backend operations and are not intended for external users. They end up exposed to the internet through misconfiguration: a routing rule set too broadly, a firewall allowlist removed without assessing downstream impact, or an architectural assumption that perimeter controls are permanent. In this case, the removal of a single IP allowlist at the network perimeter layer was enough. The organisation’s internal APIs had never been built to authenticate external requests, because the design assumed they would never see one.

2. Was this a targeted attack against the organisation?

No. The forensic evidence is consistent with opportunistic, automated exploitation. Attackers deploy scripts to scan the internet for accessible interfaces and extract data from whatever they find open. There was no indication of victim-specific reconnaissance, advanced tooling, or deliberate targeting of this particular organisation. The threat actor appeared to have found an exposed endpoint and kept pulling data until access was cut.

The seriousness of the impact had more to do with what the endpoints served than with who the attacker was.

3. What are the organisation’s obligations to MAS when a breach like this occurs?

MAS-regulated financial institutions are subject to Technology Risk Management notice requirements that mandate notification to MAS no more than one hour after discovering a relevant incident, and submission of a root cause analysis report within 14 days. A data exposure affecting tens of thousands of customer records — including identity and financial information — would meet the threshold for mandatory notification.

Beyond the immediate reporting requirement, MAS expects institutions to demonstrate that adequate IT controls were in place to protect customer information from unauthorised access or disclosure — and where they were not, to show that remediation was timely and proportionate.

Non-compliance carries penalties of up to SGD 1 million under the Financial Services and Markets Act, with higher exposure where a breach reveals multiple infractions. This is why the quality of forensic documentation matters: a regulatory submission that cannot precisely characterise the root cause, bound the scope of exposure, or validate containment will invite much closer scrutiny than one that can.

Organisations that want to understand the broader framework can refer to the MAS Technology Risk Management Guidelines, which set out best practice standards alongside the binding notice requirements.

4. How did the attacker know which internal endpoints to call?

Initial requests probed API documentation interfaces — endpoints that describe an application’s available routes and parameters, used by developers to understand and test the system. These documentation endpoints were also publicly reachable. Once the scripts had mapped the internal namespace through documentation, they transitioned to systematic querying of data-retrieval endpoints. The organisation assessed that API documentation associated with a non-production environment may have been accessible during this period, which would have further simplified discovery. Restricting API documentation and developer-facing interfaces from public access is a standard hardening measure for exactly this reason; see OWASP API9 – Improper Inventory Management for the underlying pattern.

5. What stopped the attack, and how was it confirmed that the exposure was closed?

The organisation implemented updated ingress and routing controls to block public access to the internal API namespace. Post-remediation telemetry — reviewed by Blackpanda as part of the containment validation — confirmed that external requests to the affected paths were denied at the perimeter layer and that no further successful responses were returned. The investigation did not identify any evidence of continued access following containment.

6. What should other organisations take away from this incident?

The two most transferable lessons are structural. First: internal APIs that handle sensitive data should enforce application-layer authentication independently of whatever network controls surround them. If removing a firewall rule would expose unprotected data endpoints, the architecture has a single point of failure. Second: API documentation and developer tooling should not be publicly reachable in production. In this case, documentation interfaces appeared to have assisted the attacker’s enumeration of the internal namespace. Both issues are addressed in the OWASP API Security Top 10 — a practical reference for any organisation assessing its own API exposure. Organisations that want to understand their current attack surface before an incident like this forces the question can explore Blackpanda’s compromise assessment services.

What This Means for Your Organisation

For a MAS-regulated financial institution, this incident carries two distinct consequences that are difficult to separate. The first is operational: customer data was accessed, containment was required, and a forensic investigation had to establish what happened and to whom. The second is regulatory: MAS expects its regulated institutions to maintain defence-in-depth, to govern configuration changes that touch security controls, and to report and account for material breaches when they occur. In this case, the architectural failure — relying on a single perimeter control as the sole barrier between internal data endpoints and the internet — implicated both consequences at once.

The MAS Technology Risk Management guidelines are explicit on this point. Defence-in-depth is not a best practice recommendation; it is a compliance requirement. An internal API that handles personal and financial customer data must have authentication enforced at the application layer, independent of whatever network controls surround it. When the network control changes — and operational environments change constantly — the application layer should still hold. Here, it did not, because it was never built to.

The practical question for any Singapore-regulated financial institution is whether the same condition exists in its own environment. Internal APIs that were designed for private infrastructure and never given external-facing authentication are common. So are perimeter controls that get quietly modified as systems evolve. The gap between those two facts is where incidents like this one live. Blackpanda’s IR-1 response assurance subscription includes Attack Surface Readiness (ASR), providing the intelligence to surface exactly this kind of exposure before a regulatory notification is the first sign that something went wrong.

About Blackpanda

Blackpanda is a Lloyd’s of London–accredited insurance coverholder and Asia’s leading local cyber incident response firm, delivering end-to-end digital emergency support across the region. We are pioneering the A2I (Assurance-to-Insurance) model in cybersecurity — uniting preparation, response, and insurance into a seamless pathway that minimises financial and operational impact from cyber attack. Through expert consulting services, response assurance subscriptions, and innovative cyber insurance, we help organisations get ready, respond, and recover from cyber attacks — all delivered by local specialists working in concert.

Our mission is clear: to bring complete cyber peace of mind to every organisation in Asia, from the first moment of breach through full recovery and beyond.